
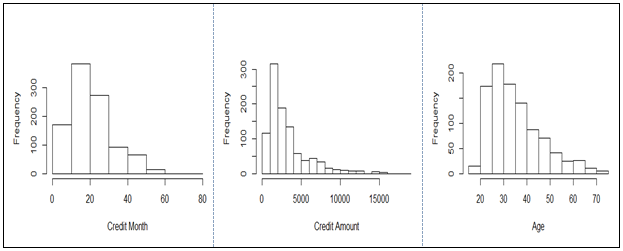

Data visualization

For each attribute I used two different plots to show how its values are spread, depending on the kind of data.

I used 10-fold cross-validation for each of the three classifiers to measure their accuracy on multiple parts of the training dataset. The Random Forests classifier performs better than the other two, so I am going to use it for my final prediction.

In general terms, the information gain is the change in information entropy H from a prior state to a state that takes some information as given: IG(T, a) = H(T) - H(T | a), where T is the training set and a is an attribute. The information gain for each attribute, sorted in ascending order, is listed in a table. The next step is to loop through all the attributes, removing one at a time from the dataset in the order given by that table. After each removal I calculated the accuracy again to find the number of attributes that achieves the best accuracy. As we can see in the plot above, we achieve the best accuracy after removing the first eight attributes with the lowest information gain.
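The information-gain ranking and the one-at-a-time attribute removal described above can be sketched roughly as follows. This is not the original code: the data is a synthetic stand-in generated with `make_classification`, the quartile binning is my own assumption so that the discrete entropy formula applies, and the helper names `entropy` and `information_gain` are hypothetical.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score


def entropy(labels):
    """Shannon entropy H (in bits) of a 1-D array of discrete labels."""
    _, counts = np.unique(labels, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())


def information_gain(attribute, labels):
    """IG(T, a) = H(T) - H(T | a) for one discrete attribute."""
    values, counts = np.unique(attribute, return_counts=True)
    weights = counts / counts.sum()
    # H(T | a): entropy of the labels within each attribute value, weighted by frequency
    conditional = sum(
        w * entropy(labels[attribute == v]) for v, w in zip(values, weights)
    )
    return entropy(labels) - conditional


# Synthetic stand-in for the credit data (assumption, not the real dataset)
X, y = make_classification(
    n_samples=500, n_features=12, n_informative=4, n_redundant=0, random_state=0
)

# Assumption: bin every column into quartiles so each attribute is discrete
edges = np.quantile(X, [0.25, 0.5, 0.75], axis=0)  # shape (3, n_features)
X_binned = np.stack(
    [np.digitize(X[:, j], edges[:, j]) for j in range(X.shape[1])], axis=1
)

# Rank attributes by information gain, lowest first
gains = np.array([information_gain(X_binned[:, j], y) for j in range(X.shape[1])])
order = np.argsort(gains)

# Remove the lowest-gain attributes one at a time and re-measure accuracy
clf = RandomForestClassifier(n_estimators=50, random_state=0)
accuracy_by_removed = {}
for n_removed in range(len(order)):  # always keep at least one attribute
    kept = order[n_removed:]
    accuracy_by_removed[n_removed] = cross_val_score(clf, X[:, kept], y, cv=10).mean()

best_n_removed = max(accuracy_by_removed, key=accuracy_by_removed.get)
print("Best accuracy after removing", best_n_removed, "attributes")
```

On the real data, `best_n_removed` would play the role of the "eight attributes" found in the plot; on this synthetic data the number will differ.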

The code is implemented in Python 3.6 using the scikit-learn library. Three classifiers were tested, Support Vector Machines (SVM), Random Forests, and Naive Bayes, to select the one that performs best on our data.
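A minimal sketch of how the three classifiers could be compared with 10-fold cross-validation in scikit-learn is shown here. The dataset is a synthetic stand-in, and the specific estimators and settings (`SVC` with defaults, `GaussianNB`, 100 trees) are assumptions on my part, since the post does not state which variants or parameters were used.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score
from sklearn.naive_bayes import GaussianNB
from sklearn.svm import SVC

# Synthetic stand-in for the credit data (assumption): 1000 rows, 20 attributes
X, y = make_classification(
    n_samples=1000, n_features=20, n_informative=8, random_state=0
)

# The three classifier families named in the post; the settings are assumptions
classifiers = {
    "SVM": SVC(),
    "Random Forests": RandomForestClassifier(n_estimators=100, random_state=0),
    "Naive Bayes": GaussianNB(),
}

# Mean accuracy over 10 folds for each classifier
scores = {}
for name, clf in classifiers.items():
    fold_scores = cross_val_score(clf, X, y, cv=10, scoring="accuracy")
    scores[name] = fold_scores.mean()
    print(f"{name}: {scores[name]:.3f}")

best = max(scores, key=scores.get)
print("Best classifier on this data:", best)
```

Which classifier wins depends on the data, so the result on this synthetic set says nothing about the credit dataset itself.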

This is an analysis and classification of the German credit data (more information in this PDF).
